Social and hUman ceNtered XR


Where physical and virtual worlds meet

Feeling brave enough?

Explore SUN in a different and unique way.

An immersive extension of the SUN XR website that you can navigate like a video game. It offers a comprehensive exploration of the three-dimensional space and lets you discover the pilot scenarios and achievements.

Are you ready? Click the button on the right.

What is SUN?


The Social and hUman ceNtered XR (SUN) project aims to investigate and develop extended reality (XR) solutions that integrate the physical and the virtual world in a convincing way, from a human and social perspective. The virtual world will be a means to augment the physical world with new opportunities for social and human interaction.


SUN’s Key Exploitable Results


KER01 – SUN Integrated Platform

The SUN platform ecosystem provides system integrators and XR development teams with a modular, ready-to-use foundation for human-centric, domain-specific XR solutions. It cuts high initial R&D costs and streamlines the integration of advanced XR functionalities, reducing time-to-deployment and overall project expenditure.

KER02 – Automatic Avatar Production Pipeline

The Automatic Avatar Production pipeline gives XR developers on-demand access to realistic avatars in a scalable, automatic, fast and cost-efficient manner, without the need for specialised know-how or artistic skills. The avatars are interoperable and reusable in applications across domains (fashion, healthcare, gaming, wellness, etc.).

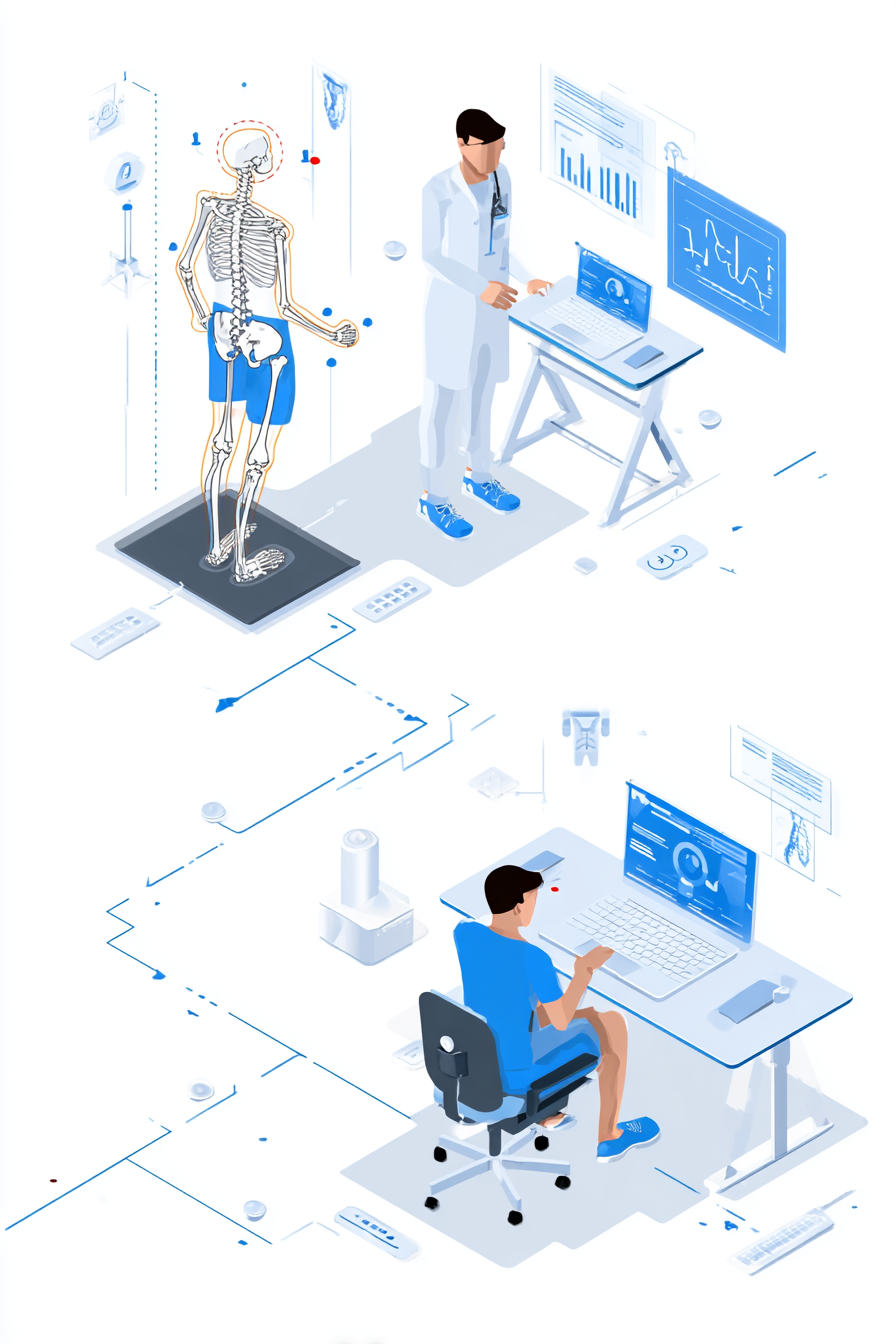

KER03 – A medium-density EMG and IMU interface for gait rehabilitation and movement analysis

The medium-density EMG interface gives doctors quantitative muscle activation information, both in real time and as a summary report at the end of rehabilitation sessions. This enables them to provide optimal personalised exercises and feedback and to motivate patients, while requiring only a couple of minutes of set-up time.

KER04 – Tokenized Platform

The Tokenized Platform uses blockchain technology (such as NFTs) and the data space concept to offer services and tools for managing digital assets in a secure and transparent way, enabling the definition of access policies, revenue models and collaborative mechanisms for the redistribution of content.

KER05 – XR Threat Detection

XR Threat Detection reduces the risk of reputational damage and increases the value of XR products by detecting and stopping several security threats, including in real time.

KER06 – Hololight Stream

Hololight Stream unlocks limitless XR performance by streaming high-fidelity, real-time visuals from powerful servers to any OpenXR-compatible device, bypassing hardware limits. With enterprise-grade security and seamless integration into leading engines like Unity, Unreal, VRED, and Omniverse, it delivers uncompromised, secure 3D experiences anywhere.

KER07 – Wearable thermal feedback device for somatosensory-impaired users

The wearable thermal feedback device is able to restore sensations of touch and caress and provide a more immersive XR experience through accurate and localised thermal stimulation.

KER08 – Automated Posture Assessment

The Automated Posture Assessment enables accurate, real-time postural assessment from anywhere, allowing clinicians to remotely monitor and evaluate rehabilitation exercises with high precision. The solution can be easily integrated with other XR systems and applications or with clinical workflows, providing a scalable, evidence-based tool for data-driven rehabilitation.

KER09 – Finger Haptic Devices

The Finger Haptic devices are mature prototypes that provide best-in-class finger haptic feedback while remaining compatible with modern VR devices, and can thus become a solid basis for a new wearable product line.

KER10 – OmniBridge

OmniBridge is a middleware for real-time, secure data exchange and orchestration between distributed XR components, offering XR and IoT platform developers a flexible and scalable solution for achieving an efficient decentralized integration of heterogeneous components.

KER11 – Dense Vision-Language Models for Open-Vocabulary Understanding

These novel multimodal models enable precise, natural-language interaction with images and 3D content and scenes by generating dense, pixel-level semantics in real time. They can enable new training-free

KER12 – Advanced AI-driven 3D design, reconstruction, processing and optimization

The methods to enhance 3D processing pipelines significantly reduce the time and effort required to produce high-quality, usable assets or to improve existing ones in terms of quality and performance.

KER13 – Policy Paper on Ethics

The policy paper on ethics builds upon benchmark research on the legal and ethical framework for XR technologies and provides a regulatory and compliance framework for those developing XR applications.

KER14 – Multimodal emotion recognition

This component provides state-of-the-art multimodal emotion recognition by fusing RGB facial analysis with wearable physiological data. The combined signals improve reliability over unimodal approaches, making the component an advanced tool for emotion-aware interfaces, affective computing research, and personalized adaptive systems.

KER15 – Emotion recognition dataset

The dataset provides reliable ground truth for training and evaluating novel emotion recognition models and can thus significantly accelerate research on emotion recognition, human–computer interaction, and personalized adaptive systems.

KER16 – Task optimization component

A reference model for task management on a manufacturing shop floor. It uses a heuristic-based algorithm that prioritizes manufacturing tasks according to predefined criteria to optimize resource allocation and task execution.

SUN’s Solutions

Scalable and cost-effective

- Use AI to incrementally learn and acquire from the physical world

- Learned items will be maintained in the SUN platform and made reusable

Mixing of physical and virtual world

- Objects in the physical world will have digital twins with physical and semantic properties

- AI to give virtual objects the same behaviour as their physical counterparts

Plausible human interaction

- Wearable haptic interfaces

- Multisensory feedback with 3D objects

- Gaze- and gesture-based interaction via AI and computer vision

Surpass device resource constraints

- AI and generative solutions to provide high-quality rendering even when the input is coarse-grained, low-resolution or has missing parts

SUN Objectives →


Explore how SUN intends to study the interactions between the physical and the virtual world. Check out our six main objectives.

Newsletter

“The project will contribute to human-centred and ethical development of digital and industrial technologies, through a two-way engagement in the development of technologies, empowering end-users and workers, and supporting social innovation, including end-users from the very beginning through the co-creation of scenarios, and later on gathering their feedback through the pilots demonstrating new uses of XR in the field of industry (ameliorating safety and capability of workers), rehabilitation, and inclusive communication”

Subscribe to SUN XR’s newsletter and stay up to date with our latest news.

Contacts


Project Coordinator: Institute of Information Science and Technologies – National Research Council CNR (IT)

Giuseppe Amato

Email: